How does dust affect LiDAR recognition performance?

In autonomous driving systems, LiDAR is a crucial sensing component and has become the primary choice for many automakers. LiDAR captures depth information about the environment, but dusty conditions scatter and attenuate its signal. The results are a surge of irregularly distributed noise in the point cloud, blurred or partially lost target outlines, a marked reduction in effective detection range, and occasional false obstacle echoes. These issues degrade target detection and tracking accuracy, affect positioning and path decisions, and may even trigger false emergency braking. How does dust, a seemingly insignificant particle, affect LiDAR recognition performance?

How does LiDAR’s “eye” function?

Before discussing why dust affects LiDAR recognition, we must first clarify how LiDAR operates.

LiDAR (Light Detection and Ranging) is an active sensor that emits laser beams. These beams reflect off surrounding objects and return to the sensor. By measuring the time each laser pulse takes to travel from emission to return, LiDAR calculates the distance to the target. Combined with the known direction of each emitted beam, these distances form a three-dimensional point cloud map of the environment.
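The time-of-flight principle described above can be sketched in a few lines. This is a minimal illustration, not any vendor's firmware: the function names and the azimuth/elevation convention are assumptions made for the example.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def range_from_time_of_flight(round_trip_s: float) -> float:
    """Distance to the target in meters.

    The pulse travels to the target and back, so the one-way
    distance is half of the total path length.
    """
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def to_cartesian(range_m: float, azimuth_rad: float, elevation_rad: float):
    """Convert one range measurement plus beam angles into an (x, y, z) point.

    Assumed convention: azimuth is measured in the horizontal plane from
    the x axis, elevation is measured upward from the horizontal plane.
    """
    horizontal = range_m * math.cos(elevation_rad)
    x = horizontal * math.cos(azimuth_rad)
    y = horizontal * math.sin(azimuth_rad)
    z = range_m * math.sin(elevation_rad)
    return (x, y, z)


if __name__ == "__main__":
    # A pulse that returns after one microsecond has hit something
    # roughly 150 m away.
    distance = range_from_time_of_flight(1e-6)
    print(distance, to_cartesian(distance, math.radians(30), math.radians(2)))
```

Because light covers about 30 cm per nanosecond, even small timing errors caused by a weak or smeared return translate directly into ranging errors.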

While this design yields highly accurate environmental data under ideal conditions, it is significantly impaired by airborne particles such as raindrops, smoke, or dust. These particles interfere with the laser beam, degrading the quality of the returned signal.

How does dust interfere with laser signals?

For human drivers, light dust in the environment is often only a minor disruption. For LiDAR, however, dust is a major source of interference.

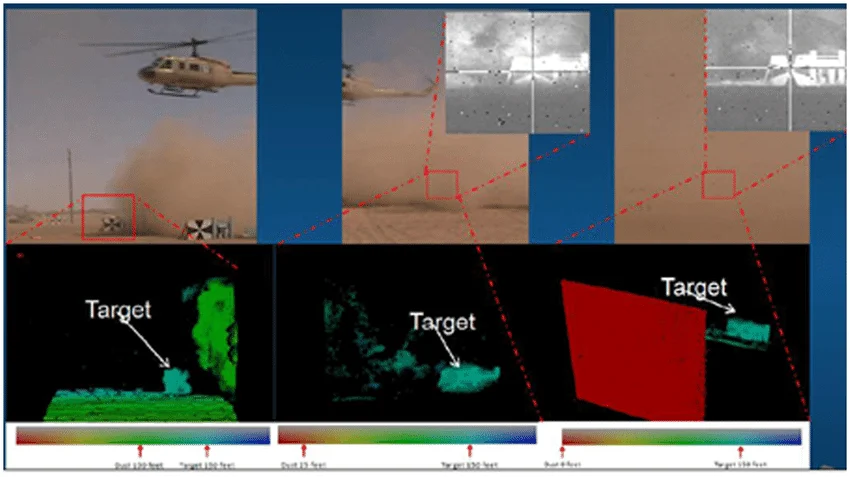

When laser beams encounter dust particles in the air, scattering occurs. Light that should travel in a straight line is deflected by these particles. This scattering weakens and blurs the returning signal, and some light may not reach the receiver at all. The greater the dust concentration, the more severe the scattering becomes and the weaker the detectable signal. This ultimately shows up as increased noise in the point cloud, blurred object contours, and even missed returns that make an occupied area look free of obstacles.

Beyond deflecting light, dust also causes energy loss during beam propagation, reducing the signal strength that reaches the LiDAR receiver. Once signal strength approaches the sensor’s noise floor, distinguishing genuine reflections from background noise becomes difficult, directly compromising ranging accuracy and the ability to identify distant objects.
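To get a feel for how quickly this energy loss eats into range, here is a rough sketch based on a simplified model: return power falls off with the square of the distance and with a two-way Beer-Lambert exponential loss through the dust. The uniform dust cloud, the extinction coefficient values, and the function names are all assumptions for illustration; real dust is patchy and real sensors have more complex detection logic.

```python
import math


def relative_return_power(range_m: float, extinction_per_m: float) -> float:
    """Return power up to a constant factor.

    1/R^2 models geometric spreading; exp(-2 * alpha * R) models the
    Beer-Lambert loss on the way out and on the way back.
    """
    return math.exp(-2.0 * extinction_per_m * range_m) / (range_m ** 2)


def max_range_in_dust(clear_air_range_m: float, extinction_per_m: float) -> float:
    """Range at which the return in dust is as weak as the weakest
    return the sensor can still detect in clear air."""
    if extinction_per_m <= 0.0:
        return clear_air_range_m
    threshold = relative_return_power(clear_air_range_m, 0.0)
    lo, hi = 1e-6, clear_air_range_m
    # Return power decreases monotonically with range, so bisection works.
    for _ in range(60):
        mid = (lo + hi) / 2.0
        if relative_return_power(mid, extinction_per_m) > threshold:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2.0


if __name__ == "__main__":
    # Hypothetical extinction coefficients, in 1/m.
    for alpha in (0.0, 0.005, 0.01, 0.02):
        print(alpha, round(max_range_in_dust(100.0, alpha), 1))
```

Under these assumptions, a sensor that reaches 100 m in clear air loses a large share of its range at even modest extinction coefficients, because the exponential loss is applied twice.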

Dust also contaminates the LiDAR viewing window. Both transmitted and received beams pass through a transparent protective cover, so when dust accumulates on its surface and thickens over time, the contamination layer absorbs and diffusely reflects part of the light. Outgoing and returning signals are weakened or even deflected. This physical obstruction significantly degrades the overall quality of the point cloud: distance measurements become inaccurate, and the system may report obstacles that are not there or fail to recognize real objects altogether.

Practical Manifestations of Dust’s Impact on Recognition Quality

Having seen how dust interferes with laser signals, let’s examine the consequences of that interference in real-world applications.

In autonomous driving or robotic navigation systems, LiDAR helps vehicles understand the three-dimensional space around them. Poor-quality point cloud data makes it difficult for the system to distinguish accurately between open road and an obstacle. Such misjudgments may lead the vehicle to brake prematurely, overlook actual obstacles, or even execute incorrect avoidance maneuvers. This interference is particularly pronounced in dusty environments like factories or mining sites, where numerous small particles suspended in the air continuously disrupt laser propagation.

Another common manifestation is increased noise in point clouds. Under clear, dust-free conditions, the point cloud returned by LiDAR primarily originates from actual object surfaces, with points arranged in an orderly fashion reflecting true boundaries. However, when air is filled with dust, many laser beams scatter off dust particles before returning. These chaotic reflected signals generate numerous “noise points” in the point cloud that bear no relation to actual objects. These meaningless points interfere with subsequent geometric calculations and target recognition algorithms, reducing the overall perception accuracy of the system.

Dust’s impact on detection range is also significant. In dusty environments, the rapid attenuation of laser signal energy shortens the effective detection distance. Objects that can be accurately identified at 100 meters under normal conditions may only be detected at 50 meters or less in dust. For high-speed autonomous vehicles, this shortened perception horizon leaves less distance in which to react and poses a potential safety risk.
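A quick back-of-the-envelope calculation shows why halving the detection range matters at speed. The sketch below uses the standard stopping-distance formula (distance covered during system latency plus braking distance). The 0.5 s latency and 6 m/s² deceleration are illustrative assumptions, not figures from any particular vehicle.

```python
import math


def stopping_distance_m(speed_m_per_s: float,
                        latency_s: float = 0.5,
                        decel_m_per_s2: float = 6.0) -> float:
    """Distance traveled during system latency plus braking to a stop."""
    return speed_m_per_s * latency_s + speed_m_per_s ** 2 / (2.0 * decel_m_per_s2)


def max_safe_speed_m_per_s(detection_range_m: float,
                           latency_s: float = 0.5,
                           decel_m_per_s2: float = 6.0) -> float:
    """Highest speed at which the vehicle can still stop within the
    detection range (positive root of v*t + v^2 / (2a) = d)."""
    at = decel_m_per_s2 * latency_s
    return -at + math.sqrt(at ** 2 + 2.0 * decel_m_per_s2 * detection_range_m)


if __name__ == "__main__":
    for detection_range in (100.0, 50.0):
        v = max_safe_speed_m_per_s(detection_range)
        print(f"{detection_range:.0f} m range -> about {v * 3.6:.0f} km/h")
```

With these assumed values, stopping from 100 km/h takes roughly 78 m, which fits inside a 100 m detection range but not inside 50 m. The speed at which the vehicle can still stop in time drops accordingly when dust cuts the range.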

Some dust particles may be mistaken for actual obstacles. For instance, tiny particles suspended in the air can reflect faint signals back, which algorithms might misinterpret as small obstacles, triggering false emergency braking or obstacle avoidance actions. In such cases, the system appears to be reacting sensibly, but it is actually responding to obstacles that do not exist.

Mitigating Dust Impact on LiDAR

Numerous countermeasures against dust interference have been proposed and implemented.

One approach focuses on hardware modifications to reduce dust adhesion to the viewing window. By selecting high-transmittance, anti-soiling materials and coatings for the LiDAR housing, dust accumulation on the protective cover can be reduced so that the laser beam is obstructed as little as possible. For instance, some applications use covers with nano-scale anti-soiling coatings, which repel dust particles and extend cleaning intervals.

On the software front, the industry has developed specialized filtering and recognition algorithms. These algorithms analyze the intensity, distance, and surrounding point distribution of laser echoes to identify points likely caused by dust scattering noise, then remove them from the point cloud data. Such “dust removal algorithms” can partially restore accurate point cloud information of the real environment while reducing the impact of false obstacles.
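As a rough illustration of the idea, the sketch below flags a point as dust-like only when it is both weak in intensity and sparsely surrounded. It is a simple heuristic in the spirit of radius outlier removal, not a specific production algorithm. The thresholds, the normalized 0 to 1 intensity scale, and the function name are assumptions chosen for the example, and the brute-force neighbor search is only suitable for small clouds.

```python
import math
from typing import List, Tuple

# (x, y, z, intensity), with intensity assumed to be normalized to 0..1.
Point = Tuple[float, float, float, float]


def filter_dust_candidates(points: List[Point],
                           intensity_threshold: float = 0.1,
                           radius_m: float = 0.3,
                           min_neighbors: int = 3) -> List[Point]:
    """Drop points that are both low-intensity and spatially isolated.

    Solid surfaces tend to produce dense, consistent returns; airborne
    dust tends to produce weak, scattered ones. Requiring both cues
    avoids deleting dark but genuine surfaces.
    """
    kept = []
    for i, (x, y, z, intensity) in enumerate(points):
        neighbors = 0
        for j, (ox, oy, oz, _) in enumerate(points):
            if i != j and math.dist((x, y, z), (ox, oy, oz)) <= radius_m:
                neighbors += 1
        is_dust_like = intensity < intensity_threshold and neighbors < min_neighbors
        if not is_dust_like:
            kept.append((x, y, z, intensity))
    return kept


if __name__ == "__main__":
    wall = [(10.0, 0.1 * k, 0.0, 0.8) for k in range(5)]  # dense, bright surface
    dust = [(3.0, 2.0, 1.0, 0.02)]                        # weak, isolated return
    print(len(filter_dust_candidates(wall + dust)))
```

Point density naturally falls with distance, so a fixed neighbor radius will treat far-away surfaces more harshly than nearby ones. A practical filter would likely scale the radius or the neighbor count with range.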

Another approach is sensor fusion, which integrates LiDAR with other sensor types. Cameras provide visual information to distinguish dust from real targets, while millimeter-wave radar offers superior penetration through rain, fog, and dust. Combining these creates a more robust perception system that performs significantly more reliably than standalone LiDAR in complex environments.

In certain extreme scenarios, active cleaning measures are incorporated, such as attaching air blowers, brushes, or other mechanical cleaning modules to the exterior of the LiDAR unit to periodically remove dust from the sensor window. However, these solutions come with higher costs and maintenance requirements, making them primarily suitable for industrial or specialized robotic environments.

Final Thoughts

Dust affects LiDAR in multiple ways. It disrupts laser propagation paths, reduces signal strength, and contaminates sensor windows, which ultimately leads to noisier point clouds, lower recognition accuracy, shorter detection range, and even misidentified obstacles. For safety-critical applications like autonomous driving, these effects cannot be overlooked.